Abstract

This project combines tiling Worley noise and smooth noise with Beer’s law and the Henyey-Greenstein phase function to ray-march through cloud formations with adjustable density, sample rate, and light properties.

Technical approach

Clouds have a characteristic shape in the popular imagination. One way to generate that shape is to combine 3D Worley noise with smooth noise. Combined in three dimensions, the two noises produce a cloud-like appearance. The resulting texture is then passed to an OpenGL shader for ray tracing and ray marching. A stripped-down version of the Project 4: Cloth Simulator code was used as a starting point.

Generating Cloud Textures

The process starts with seeding a bounding box with numerous random points (known as Worley points), then calculating the distance to the nearest Worley point for every pixel. It’s helpful to reduce the computational demand by dividing the bounding box into a grid of nine cells and adding only one Worley point to each cell. This limits the “nearest point” search for each pixel to the points in its own cell and in the adjacent cells: 8 neighbors in 2D, and 26 in 3D. Having the distance from each pixel to the nearest Worley point provides the framework for generating the overlapping circular shapes of a cloud’s edges. To create seamless cloud textures that don’t abruptly end when the camera position changes, the Worley point formation is “wrapped”. This is done by duplicating the bounding box (grid cells and Worley points included) until the original is surrounded (by 8 clones in 2D space, and 26 in 3D space), then including the adjacent Worley points from the clones in the nearest point calculation.

White dots: Cell boundaries

White Dots: Density
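The wrapped nearest-point search described above can be sketched as follows. This is a minimal illustration, not the project’s code: the function names, the `cells` parameter, and the choice to return distances in cell units are all assumptions made for the example.

```python
import math
import random

def make_worley_points(cells, seed=0):
    """Place one random feature point in each grid cell (cell-local coordinates in [0, 1))."""
    rng = random.Random(seed)
    return {(i, j, k): (rng.random(), rng.random(), rng.random())
            for i in range(cells) for j in range(cells) for k in range(cells)}

def worley_distance(p, points, cells):
    """Distance (in cell units) from p to the nearest feature point, with wrap-around.

    p is a 3D position; coordinates are wrapped into [0, 1) so the field tiles.
    """
    # Scale into grid units so every cell has side length 1.
    gx, gy, gz = ((c % 1.0) * cells for c in p)
    cx, cy, cz = math.floor(gx), math.floor(gy), math.floor(gz)
    best = math.inf
    # Visit the sample's own cell and its 26 neighbours.
    for dx in (-1, 0, 1):
        for dy in (-1, 0, 1):
            for dz in (-1, 0, 1):
                nx, ny, nz = cx + dx, cy + dy, cz + dz
                # Wrapping the cell index is equivalent to looking into a clone
                # of the bounding box; the unwrapped index keeps the position.
                fx, fy, fz = points[(nx % cells, ny % cells, nz % cells)]
                d = math.dist((gx, gy, gz), (nx + fx, ny + fy, nz + fz))
                best = min(best, d)
    return best
```

Wrapping the cell index with a modulo has the same effect as physically duplicating the bounding box, without storing the clones.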

Worley noise provides decent outlines for the clouds, but is rather lacking when it comes to texture. To populate the Worley cloud scaffolds, Perlin noise (a type of gradient noise often used for textures) can be helpful. Smooth noise is another option. After trying both, smooth noise was chosen for this project. In 2D, the smooth noise generation process involves bilinear filtering of random noise at every pixel; in 3D the same idea becomes trilinear filtering. Extending the technique to 3D space was a design choice made to make the clouds appear more realistic.
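As an illustration of smooth noise in 3D, the sketch below interpolates trilinearly between random values stored on a lattice. The grid size, the helper names, and the wrap-around indexing are assumptions made for this example rather than details taken from the project.

```python
import math
import random

def make_noise_grid(size, seed=0):
    """A size^3 lattice of uniform random values in [0, 1)."""
    rng = random.Random(seed)
    return [[[rng.random() for _ in range(size)]
             for _ in range(size)] for _ in range(size)]

def lerp(a, b, t):
    """Linear interpolation between a and b."""
    return a + (b - a) * t

def smooth_noise(x, y, z, grid):
    """Trilinearly interpolated lattice noise; indices wrap so the result tiles."""
    size = len(grid)
    x0, y0, z0 = math.floor(x), math.floor(y), math.floor(z)
    tx, ty, tz = x - x0, y - y0, z - z0

    def g(i, j, k):
        return grid[i % size][j % size][k % size]

    # Interpolate along x on the four cell edges, then along y, then along z.
    c00 = lerp(g(x0, y0, z0), g(x0 + 1, y0, z0), tx)
    c10 = lerp(g(x0, y0 + 1, z0), g(x0 + 1, y0 + 1, z0), tx)
    c01 = lerp(g(x0, y0, z0 + 1), g(x0 + 1, y0, z0 + 1), tx)
    c11 = lerp(g(x0, y0 + 1, z0 + 1), g(x0 + 1, y0 + 1, z0 + 1), tx)
    return lerp(lerp(c00, c10, ty), lerp(c01, c11, ty), tz)
```

Sampling the lattice at a lower frequency than the output texture (for example `smooth_noise(x / 8, y / 8, z / 8, grid)`) is what produces the soft, blurred look.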

Then, the Worley and smooth noise were combined using the scale function described in Fredrik Häggström’s “Real-time rendering of volumetric clouds” thesis.
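The exact function from the thesis is not reproduced here. As a rough illustration of the idea, the sketch below uses a generic range-remapping helper, a common way to let one noise erode another in cloud renderers. The names `remap` and `cloud_density`, the `erosion` parameter, and the convention that `worley` is already inverted and normalized (1 near a Worley point, 0 far away) are all assumptions.

```python
def remap(value, old_min, old_max, new_min, new_max):
    """Linearly map value from [old_min, old_max] to [new_min, new_max]."""
    return new_min + (value - old_min) / (old_max - old_min) * (new_max - new_min)

def clamp01(v):
    """Clamp v to the range [0, 1]."""
    return max(0.0, min(1.0, v))

def cloud_density(worley, smooth, erosion=0.5):
    """Hypothetical combination: smooth noise raises the floor below which
    the Worley shape is cut away, so the cloud edges become irregular.

    worley  -- inverted, normalized Worley value in [0, 1]
    smooth  -- smooth noise value in [0, 1]
    erosion -- strength of the cut, in [0, 1) so the remap range never collapses
    """
    return clamp01(remap(worley, erosion * smooth, 1.0, 0.0, 1.0))
```

With `erosion=0.0` the Worley shape passes through unchanged; larger values carve away more of the low-density fringe.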

Raytracing

At this point, a cloud-like texture is passed into the shader. Inside the shader, the texture is placed in a rectangular container. At first my team tried outputting the texture on the surface of the rectangle. This resulted in the following output (image on the left below):

Wrong implementation

Correct implementation

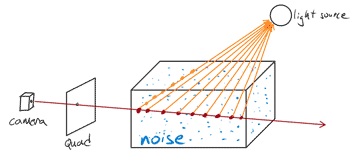

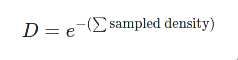

A better approach to this problem is to use a quad implementation (image on the right above). The idea is to shoot a ray from the camera at the quad and intersect it with the texture container. Each ray that intersects the container samples the texture at an adjustable sample_rate, and the output pixel on the quad is calculated using Beer’s law [5]. This method properly draws the clouds onto the scene and enables manipulation of the cloud placement, cloud size, and point of view.
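The per-ray computation can be sketched on the CPU as below (the project does this in an OpenGL shader). The sketch assumes an axis-aligned container, treats `sample_rate` as the number of samples taken along the ray, and introduces hypothetical names (`intersect_box`, `march_transmittance`, `density_at`, `absorption`) for illustration.

```python
import math

def intersect_box(origin, direction, box_min, box_max):
    """Slab test: return (t_near, t_far) where the ray crosses the box, or None."""
    t_near, t_far = -math.inf, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray is parallel to this pair of faces: it must start between them.
            if o < lo or o > hi:
                return None
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
    if t_near > t_far or t_far < 0.0:
        return None
    return max(t_near, 0.0), t_far

def march_transmittance(density_at, origin, direction, t_near, t_far,
                        sample_rate, absorption=1.0):
    """Beer's law: fraction of light surviving the ray segment [t_near, t_far]."""
    step = (t_far - t_near) / sample_rate
    optical_depth = 0.0
    for i in range(sample_rate):
        t = t_near + (i + 0.5) * step  # sample at the middle of each step
        p = tuple(o + t * d for o, d in zip(origin, direction))
        optical_depth += density_at(p) * step
    return math.exp(-absorption * optical_depth)
```

A transmittance of 1 means the background is fully visible through the container, and values near 0 mean the cloud is opaque along that ray.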

Raymarching

The next step was to add light to the clouds. As the ray passes through the texture, another ray is shot from each sample point towards the light source, adding up the light landing at every texture point between the light source and the sample point. This gives an overall light estimate for every sample point on the ray. Combining the light for every sample point gives the total light output for that pixel on the quad.

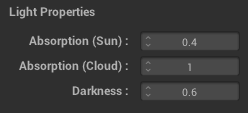
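One common way to implement this light march is sketched below. It is an interpretation under stated assumptions, not the project’s shader: density is accumulated along the secondary ray and attenuated with Beer’s law, and the names `light_reaching`, `shade_ray`, `light_steps`, and `absorption` are hypothetical. `density_at` is assumed to return 0 outside the cloud container.

```python
import math

def light_reaching(density_at, point, light_pos, light_steps, absorption=1.0):
    """Fraction of light that survives the trip from light_pos to point."""
    to_light = tuple(l - p for l, p in zip(light_pos, point))
    step = math.sqrt(sum(c * c for c in to_light)) / light_steps
    depth = 0.0
    for i in range(light_steps):
        s = (i + 0.5) / light_steps  # fraction of the way towards the light
        q = tuple(p + s * c for p, c in zip(point, to_light))
        depth += density_at(q) * step
    return math.exp(-absorption * depth)

def shade_ray(density_at, origin, direction, t_near, t_far, sample_rate,
              light_pos, light_steps=8, absorption=1.0):
    """March the view ray; return (light_energy, transmittance) for the pixel."""
    step = (t_far - t_near) / sample_rate
    transmittance = 1.0
    light_energy = 0.0
    for i in range(sample_rate):
        t = t_near + (i + 0.5) * step
        p = tuple(o + t * d for o, d in zip(origin, direction))
        d = density_at(p)
        if d > 0.0:
            lit = light_reaching(density_at, p, light_pos, light_steps, absorption)
            # Light scattered here is dimmed by the cloud already in front of it.
            light_energy += transmittance * d * step * lit
            transmittance *= math.exp(-absorption * d * step)
    return light_energy, transmittance
```

The pixel colour can then be blended as `background * transmittance + light_colour * light_energy`, which is again an assumption about the final compositing step.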

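The Henyey-Greenstein phase function describes how strongly light scatters as a function of the angle between the incoming light and the viewing direction, which makes it useful for adjusting how the light falls on our cloud. Additionally, my team placed a variety of scalar adjustments in the graphical user interface to help with troubleshooting and dialing in the settings.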
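For reference, the standard Henyey-Greenstein phase function can be written in a few lines. The function name and argument names are chosen for this sketch; `g` is the usual asymmetry parameter in (-1, 1), where 0 gives isotropic scattering and positive values favour forward scattering.

```python
import math

def henyey_greenstein(cos_theta, g):
    """Henyey-Greenstein phase function, normalized over the unit sphere.

    cos_theta -- cosine of the angle between the light direction and the view direction
    g         -- asymmetry parameter in (-1, 1)
    """
    denom = 1.0 + g * g - 2.0 * g * cos_theta
    return (1.0 - g * g) / (4.0 * math.pi * denom ** 1.5)
```

In a ray marcher, this value would typically multiply the light gathered at each sample point, with `g` exposed as one of the adjustable scalars.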

At this point the clouds were starting to look really good and required only minor tweaks.

Performance and compatibility note

OpenGL has been deprecated by macOS. Many of the OpenGL functions that would enable quicker texture generation on the GPU, such as compute shaders (introduced in OpenGL 4.3), are only found in versions newer than the OpenGL 4.1 that macOS supports. To maintain compatibility with Apple devices, compute shaders were not used, and all of the texture generation is done on the CPU. OpenMP was used to parallelize parts of the process, which is still significantly slower than a compute shader.

Contributions and special mentions

This project is a fork of my original work [6] in the Computer Graphics course at the University of California, Berkeley (UCB). I would like to extend a special thank you to my team and the UCB Computer Science Department staff who made this project possible and fun.

In the spring of 2021, my team’s project was selected as the top showcase winner out of almost 70 competing teams [7].

Results

This project achieved my team’s original goal of rendering volumetric clouds that are adjustable in size and placement. Additionally, the GUI enables a variety of further adjustments in real time, such as light location, sample rate, and scale. Lastly, the point of view is also adjustable.

References

1. Olajos, Rikard. (2016). Real-Time Rendering of Volumetric Clouds [Master’s thesis, Lund University]. ISSN 1650-2884 LU-CS-EX 2016-42. https://lup.lub.lu.se/luur/download?func=downloadFile&recordOId=8893256&fileOId=8893258

2. Häggström, Fredrik. (2018). Real-time rendering of volumetric clouds [Master’s thesis, Umeå Universitet]. https://www.diva-portal.org/smash/get/diva2:1223894/FULLTEXT01.pdf

3. Lague, S. [Sebastian Lague] (2019, October 7). Coding Adventure: Clouds [Video file]. YouTube. https://www.youtube.com/watch?v=4QOcCGI6xOU

4. Vandevenne, L. (2004). Lode’s Computer Graphics Tutorial. Texture Generation using Random Noise. https://lodev.org/cgtutor/randomnoise.html

5. Multiple Editors (2021). Beer-Lambert Law. Wikipedia. https://en.wikipedia.org/wiki/Beer%E2%80%93Lambert_law

6. Zachary Y, Roman T, Callam I, William D (2021). Cloud Renderer Project. https://184clouds.xyz/

7. Course Staff (2021). CS184/284A Final Project Showcase. UC Berkeley course web site. https://cs184.eecs.berkeley.edu/sp21/docs/final_showcase